For the past year, SealingTech’s Innovation Team has been working on an open source side project called Expandable Defensive Cyber Operations Platform (EDCOP), with the goal of building a highly scalable containerized network security platform. I always tell people that if they want to try it on hardware, they need to get an Intel X710 or XL710 network card. One of my co-workers suggested I write a quick blog post about why we say it’s such a necessity.

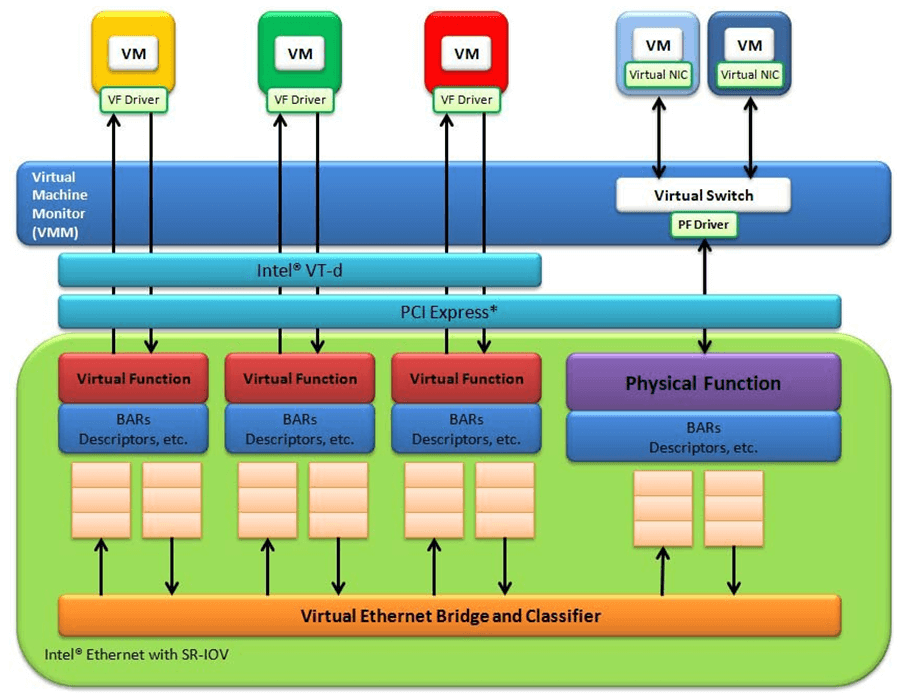

About 18 months ago, I was researching how to run a high-speed, multi-tenant IDS with as little hardware as possible. I ended up briefing the concepts and findings at Bro4Pros 2017. The proof of concept was to use Docker containers, one per tenant, each monitoring a different VLAN. SR-IOV (released publicly in 2008) turned out to be the perfect solution for this job. It allows you to divide a physical NIC into multiple virtual functions (VFs), which the operating system sees as individual NICs.

*Shamelessly copied from the DPDK docs (https://doc.dpdk.org/guides/nics/intel_vf.html)

SR-IOV allows you to associate each virtual NIC with a VLAN; the NIC hardware strips the VLAN header and delivers the packet to that virtual NIC. It’s heavily used in the virtualization world because it lets packets bypass the hypervisor’s software switching and go directly into VMs and containers.

A lot of NIC manufacturers and chipsets support SR-IOV, so why are we so insistent on the Intel X710/XL710 (no, we don’t get a kickback when someone buys one)? Network monitoring tools such as Bro, Suricata, Argus, and others need the interface to be in promiscuous mode so they can see every packet on the wire. That’s mainly because security people are addicted to data and don’t trust anyone… not even themselves. Since SR-IOV was originally designed for virtualization in multi-tenant environments, NIC vendors didn’t allow a virtual function to be put into promiscuous mode. This was seen as a “security feature” to prevent VMs from snooping on each other’s traffic in a multi-tenant cloud environment (a security feature that limits security monitoring… it’s like TLS with PFS all over again).

The Intel X710/XL710 Adapter

At the time of writing, I’ve only seen one “commodity” network card that supports SR-IOV in promiscuous mode: the Intel X710/XL710. I’m a big fan of these cards (seriously, not getting paid to say this). Both versions use the same 40Gbps chipset. The main difference between them is that the X710 has four SFP+ ports and the XL710 has two QSFP+ ports. The XL710 has a smaller profile, so it fits nicely inside condensed spaces like a small Supermicro appliance or a multi-node server chassis. WARNING: Although the XL710 has two 40Gbps QSFP+ ports, it CAN’T do 80Gbps. The chipset and PCIe bus will limit you to ~45Gbps total. The card is really designed for Active/Standby use.
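As a rough sanity check on that warning, assume the card runs at its native PCIe 3.0 x8 link (8 GT/s per lane with 128b/130b encoding). The raw link bandwidth is then:

$$
B_{\text{PCIe 3.0}\times 8} = 8\ \text{lanes} \times 8\ \text{GT/s} \times \frac{128}{130} \approx 63\ \text{Gb/s per direction} < 80\ \text{Gb/s}
$$

That is already short of 80Gbps before packet headers, descriptor fetches, and other PCIe protocol overhead are subtracted, so the lower ~45Gbps practical ceiling is not surprising.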

X710-DA4

XL710-QDA2

Playing with SR-IOV and Promiscuous Mode on the X710/XL710

Okay, so now you’ve dropped ~$450 on your new card. How do you enable SR-IOV, put it into promiscuous mode, and start shooting VLANs into your containers at 10Gbps+? First, make sure your Linux kernel and iproute2 support it (kernel v4.5 or higher). CentOS/RHEL 7.3+ are also good to go (Red Hat back-ported the patch).
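A quick way to check the kernel requirement is to compare `uname -r` against 4.5. This is a minimal sketch, assuming GNU coreutils `sort -V` is available; `version_ge` is a hypothetical helper name, not part of any tool.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): succeed if a kernel release is >= a minimum.
# Relies on GNU `sort -V` for version ordering; 4.5 is the minimum from the text.

# version_ge MIN ACTUAL -> exit status 0 if ACTUAL >= MIN
version_ge() {
    local min="$1" actual="$2"
    # Drop any distro suffix such as "-1160.el7.x86_64" before comparing.
    actual="${actual%%-*}"
    # If MIN sorts first (or the two are equal), ACTUAL is at least MIN.
    [ "$(printf '%s\n%s\n' "$min" "$actual" | sort -V | head -n1)" = "$min" ]
}
```

Usage: `version_ge 4.5 "$(uname -r)" && echo "kernel OK"`. Note that CentOS/RHEL 7 reports a 3.10 kernel, so this check fails there even though Red Hat back-ported the feature; on those systems check the release (7.3+) instead.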

In order to enable SR-IOV, you need to turn on Intel VT-d both in the BIOS and in the kernel. To enable it in the kernel, add `intel_iommu=on iommu=pt` to the kernel boot parameters by editing `/etc/default/grub` (e.g. with `vim`) as root:

```
<snip>
GRUB_CMDLINE_LINUX="crashkernel=auto rd.lvm.lv=centos/root rd.lvm.lv=centos/swap rhgb quiet intel_iommu=on iommu=pt"
<snip>
```

Then regenerate the GRUB configuration and reboot:

```bash
# BIOS boot path on CentOS/RHEL 7; UEFI installs use /boot/efi/EFI/centos/grub.cfg instead
grub2-mkconfig -o /boot/grub2/grub.cfg
reboot
```
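After the reboot, it is worth confirming that the options actually reached the running kernel. This is a minimal sketch, assuming a copy of the kernel command line is passed in as a string; `has_iommu_params` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): check a kernel command line for the
# IOMMU options added in /etc/default/grub. On a live host, pass "$(cat /proc/cmdline)".

# has_iommu_params CMDLINE -> exit status 0 if intel_iommu=on and iommu=pt are both present
has_iommu_params() {
    # Pad with spaces so each option is matched as a whole word, even at the ends.
    local cmdline=" $1 "
    [[ "$cmdline" == *" intel_iommu=on "* ]] && [[ "$cmdline" == *" iommu=pt "* ]]
}
```

Usage: `has_iommu_params "$(cat /proc/cmdline)" && echo "IOMMU options present"`. If VT-d is also enabled in the BIOS, `/sys/kernel/iommu_groups/` should contain numbered group directories; an empty directory usually means the BIOS setting is still off.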

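To create the virtual interfaces, simply echo the number you want into the `sriov_numvfs` sysfs attribute of the physical port. As root, for example, this creates four VFs on `ens2f0`:

```bash
echo 4 > /sys/class/net/ens2f0/device/sriov_numvfs
```

Writing 0 removes all VFs from the port, and the maximum the port supports is listed in `sriov_totalvfs` in the same directory.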
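A slightly more defensive version of that step is sketched below. It assumes the same sysfs layout as the command above, checks the requested count against `sriov_totalvfs`, and resets the count to 0 first because the kernel refuses to change an already non-zero VF count in place. `create_vfs` and the `SYSFS_ROOT` override are hypothetical names, added so the logic can be exercised against a mock directory.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): create N VFs on a physical function via sysfs.
# SYSFS_ROOT is a hypothetical override (defaults to /sys) for testing against a mock tree.

# create_vfs PF COUNT -> exit status 0 on success
create_vfs() {
    local pf="$1" want="$2"
    local dev="${SYSFS_ROOT:-/sys}/class/net/${pf}/device"
    local total
    # Maximum number of VFs this port advertises.
    total="$(cat "${dev}/sriov_totalvfs")" || return 1
    if (( want < 1 || want > total )); then
        echo "requested ${want} VFs, but ${pf} supports 1-${total}" >&2
        return 1
    fi
    # Changing a non-zero VF count directly fails with EBUSY, so disable VFs first.
    echo 0 > "${dev}/sriov_numvfs" || return 1
    echo "${want}" > "${dev}/sriov_numvfs"
}
```

Usage (as root): `create_vfs ens2f0 4`.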

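The new interfaces should show up in `ifconfig` immediately. You can now assign VLANs and promiscuous mode using the `ip link` command against the physical port (VFs are numbered from 0, so `vf 1` is the second VF):

```bash
ip link set dev ens2f0 vf 1 vlan 100   # VF 1 only receives VLAN 100, with the tag stripped
ip link set dev ens2f0 vf 1 trust on   # a trusted VF is allowed to enter promiscuous mode
```

Once the VF is trusted, promiscuous mode itself is switched on from the VF's own interface (`ip link set dev <vf-interface> promisc on`); sensors such as Bro and Suricata normally request it themselves when they open the interface.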
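For the one-container-per-tenant layout described earlier, a small helper can generate these commands for several VLANs at once. This is a minimal sketch, assuming VFs are handed out in order starting at 0 and that the tenant VLAN list is supplied by you; `vf_vlan_commands` is a hypothetical name, and it only prints commands so you can review them before running anything.

```bash
#!/usr/bin/env bash
# Minimal sketch (hypothetical helper): print `ip link` commands that pin VF 0, 1, 2, ...
# to the given VLANs and mark each VF trusted. Nothing is executed.

# vf_vlan_commands PF VLAN [VLAN...]
vf_vlan_commands() {
    local pf="$1"; shift
    local vlan vf=0
    # Validate every ID first (802.1Q allows 1-4094) so a bad list prints nothing.
    for vlan in "$@"; do
        if ! [[ "$vlan" =~ ^[1-9][0-9]*$ ]] || (( vlan > 4094 )); then
            echo "invalid 802.1Q VLAN ID: ${vlan}" >&2
            return 1
        fi
    done
    for vlan in "$@"; do
        echo "ip link set dev ${pf} vf ${vf} vlan ${vlan}"
        echo "ip link set dev ${pf} vf ${vf} trust on"
        vf=$((vf + 1))
    done
}
```

Usage: review `vf_vlan_commands ens2f0 100 200 300`, then pipe the same output to `sh` as root. Printing rather than executing keeps the mapping easy to audit before it touches the NIC.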

To Use or Not to Use SR-IOV… That is the question

While SR-IOV can be a powerful option for speeding up Network Function Virtualization (NFV), it isn’t well suited for every scenario. For example, while chaining containers/VMs together in a row is technically possible using SR-IOV, it stops being effective after 2-3 of them. This is because each packet passed between the VMs has to be sent back to the network card’s VF, processed, and sent back across the PCIe bus to host memory. It’s best to stick with North/South traffic over the VF and use a vSwitch (preferably DPDK-backed) for East/West traffic between VMs on the same host. Intel has a great paper titled “SR-IOV for NFV Solutions: Practical Considerations and Thoughts” that goes into depth on the reasons why and some of the testing they’ve done.
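A back-of-the-envelope way to see why chains stop scaling: under a simplified model (an assumption for illustration, not taken from Intel's paper), every VM in the chain receives each packet by a separate DMA from the NIC, so the NIC-to-host direction of a PCIe 3.0 x8 link carries the same traffic once per VM. `chain_ceiling_mbps` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# Rough sketch under a simplified model (assumption): an N-VM SR-IOV chain moves
# each packet NIC -> host N times, so that PCIe direction is shared N ways.

# PCIe 3.0 x8 raw bandwidth per direction in Mb/s: 8 lanes x 8000 MT/s x 128/130.
PCIE3_X8_MBPS=$(( 8 * 8000 * 128 / 130 ))

# chain_ceiling_mbps N -> rough upper bound (Mb/s) on chain throughput for N VMs
chain_ceiling_mbps() {
    local n="$1"
    if (( n < 1 )); then
        echo "chain length must be >= 1" >&2
        return 1
    fi
    echo $(( PCIE3_X8_MBPS / n ))
}
```

Usage: `for n in 1 2 3 4; do echo "$n VMs: ~$(chain_ceiling_mbps "$n") Mb/s"; done`. The ceiling halves with two VMs and drops to about a third with three, before any PCIe protocol overhead, which lines up with the 2-3 VM rule of thumb above.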

If you are interested in NFV solutions that use containers, check out the Expandable DCO Platform (EDCOP) open source project we’ve been working on. EDCOP uses Kubernetes to deploy and orchestrate different network defense tools such as IPSs, IDSs, and SIEM from a centralized dashboard.
